AI gets it wrong all the time

And I think that's good for us.

AI is fast. It will generate a response quicker than we could ever research or write it ourselves. Confident. Prolific. Also wrong. A lot.

I think that might be the point.

We’ve all met that person. The one at the table who loves the sound of their own voice so much they’ll say anything to be heard. Nonsense delivered with total conviction. Dangerous, whether it’s coming from a machine or a person.

AI can be that “guy”. Fluent. Certain. Occasionally completely wrong.

The difference is, we’re not obliged to nod along.

I’m two weeks into my Master’s Program, and I can already see what the first module is designed to achieve. Spin us around. Disorient us on purpose.

We were tasked with sharing a project proposal with AI, then setting parameters so it would come back with how that project would have been executed in past decades. I chose the nineties. A decade where AI was barely a whisper. Rule-based responses. No generative anything. A decade where what we were asking for simply didn’t exist yet.

We were forcing it to get it wrong.

And then we had to go and check. Research the decade ourselves. Find out whether the references it offered were real, whether the technology it described actually existed, whether the project would have been possible at all.

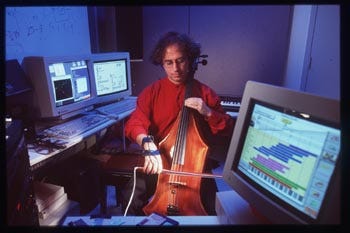

Here’s the beautiful thing. Through challenging it, through chasing down the threads it handed me, my project got better. AI helped me discover people making things in the nineties that I didn’t know existed. It opened doors I didn’t know were there. Guitar Hero. It grew out of Tod Machover’s group at the MIT Media Lab, where his students went on to found Harmonix, the studio behind the game, off the back of projects designed to let anyone make music. Hyperinstruments. (That’s another post in itself.)

My original idea was this: turn my life drawings into music. The lines. The shapes. The pressure of the charcoal. Inspired by Daphne Oram, who I wrote about last week. She drew sound in the sixties. I wanted to see what that could look like now.

The research cracked it open.

What I found wasn’t just a way to translate a finished drawing into sound. It was a way to keep me in the room. Live. In session. My actual movements, my pressure on the paper, my body in the act of drawing, all of it feeding into the music as it happens. A collaboration in real time. The technology responding to me, not just to what I’ve made.

That’s the gap. That’s where it lives.

Take me out of the picture. Take my humanity out. And emotion can’t be translated. It becomes a process. Technically fine. Emotionally flat. If we’re not feeling anything, what’s the point?

AI will give you the list. It will give you options, references, frameworks. What it can’t do is tell you whether any of it is right. Whether it’s actually what you want to be making. Its taste is often off. It has no instincts. It can get caught in a loop, start referencing itself, hallucinating. The moment we switch off and let it take the wheel, we end up somewhere we really don’t want to go.

But that gap between what it outputs and what we’re looking for? That’s where our authorship lives. That’s where the work actually happens. The checking. The questioning. The moment you say, no, not that, and you go digging for something truer.

AI can give me a system to turn drawings into music. What it can’t do is be me, drawing. The mess of it. The hesitation. The pressure that changes when I’m uncertain or when I’m sure. That’s mine. That’s the bit it can’t replicate.

And I want to protect that.

Mind blown, so cool.